ABSTRACT: This whitepaper sets out to answer the question “Can a distributed team work as efficiently as a team based in a central location?” I aim to answer it by identifying best practice, explaining why a co-located team works, and describing what can go wrong with a distributed team and the mitigating actions that can be applied, many of which are illustrated in the Case Study.

Starting Point

With the evolution of online working, gone are the days when a company was limited to the country it started operating in. More and more companies are now able to set up in multiple countries and manage their distributed operations as one company rather than as a series of siloed smaller companies.

These benefits have also brought with them a larger market for development and testing services. Previously these services would be provided by teams located either in the immediate area or on client sites, but now the teams can be located anywhere in the world, often in multiple countries.

At this point it is important to discuss why a company would consider using teams that are not co-located. The driver for placing teams in multiple locations or countries is not quality but cost, as it has been proven that to obtain the best quality the Development and Test teams should be co-located. This is not to say that a company that chooses a distributed team does not also expect the delivered product to be of high quality, which is the conundrum we face.

By comparing the pros and cons of a co-located team and a distributed team, it is possible to select the right processes and procedures to ensure delivery on time and to budget when using a distributed team.

Co-located Team

Studies have shown that for optimum efficiency between Test and Development you need to have both teams co-located. I have been involved in Accelerated Delivery projects where Test, Development and BAs sit together within a pod (an enclosed seating area). Although this initially feels like a forced environment, it very quickly breaks down the barriers between Development, Test and Business Analysts, as you feel like one team.

From a testing perspective there are many benefits, but the key areas are Meetings, Defect Resolution and Code Deployment, as described in the following examples.

Meetings – Each morning the team has a quick 10 to 15 minute meeting. This enables each member to discuss the previous day's progress and plans for the current day, highlighting any blockers. It instils confidence between team members (Dev, Test and BA), as each person understands what the rest of the team is working on, and it encourages team members to provide a support network for each other where possible.

Defect Resolution – Because the team members are physically close to each other, any anomalies found by Test can be discussed with Development, and validated by the Business Analyst, while they are still fresh in the tester's mind. As part of this process the issue can be discussed so that all parties are clear on the tester's understanding and expectations of the system. This greatly reduces the number of defects raised where the system is working as per requirement. This type of non-defect tends to occur on new systems and can very quickly burn a lot of Development's time, so it's important to reduce these as much as possible.

Code Deployment – It is always critical that Development works with the Test team in the following areas:

- Address defects from a testing priority where possible

- Provide a release note ahead of implementing a build so that the test team can prepare to retest defects to be delivered

The more in sync the Test and Development teams are in these areas, the more efficient the retest process.

Now that we have identified why a co-located team works so well, I want to look at a distributed team, in particular an actual project that I was recently involved in, which is described in the Case Study.

Case Study

For one of our customers I was asked to provide support where a distributed model had been used, with Development in Germany and the Test team, Business Analysts and System based in Australia.

System testing had already been completed by the vendors within their own testing environments, but this had not been managed centrally, so confidence in the coverage was low. System Integration Testing (SIT) had been running for several weeks with very slow progress, which was thought to be due to the slow turnaround of defects from the development team. The slow progress meant that the original deadline for SIT completion would not be met.

There were a couple of areas that were directly impacting the test team. One was that Development did not provide visibility of when code was being deployed or which defects had been fixed. When information was provided by Development, the fix success rate was very low at 10 to 15%. This further reduced the confidence level between Development and Test.

Understandably the business was very concerned, as the SIT completion deadline would not be met, but at that time it wasn't clear why progress was so slow. A projection model based on the number of defects resolved each day and the total number of open defects showed that at least another 3 weeks were needed before testing would complete, provided no new defects were found. This would miss the deadline by at least two weeks.
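A projection of this kind can be sketched as a simple burn-down calculation. The following is a minimal illustration rather than the model used on the project: the function name and the sample figures are hypothetical, and it assumes that the average daily resolution rate continues and that no new defects are raised.

```python
import math


def projected_days_remaining(open_defects: int, resolved_per_day: list[float]) -> int:
    """Estimate the working days needed to clear all open defects.

    Assumes the average daily resolution rate seen so far continues and
    that no new defects are raised.
    """
    if not resolved_per_day:
        raise ValueError("need at least one day of resolution data")
    average_rate = sum(resolved_per_day) / len(resolved_per_day)
    if average_rate <= 0:
        raise ValueError("no defects are being resolved, so no projection is possible")
    return math.ceil(open_defects / average_rate)


# Hypothetical figures for illustration only (not taken from the project).
days = projected_days_remaining(open_defects=60, resolved_per_day=[3, 5, 4, 4])
print(f"Projected working days remaining: {days}")
```

Comparing the projected figure with the working days left before the deadline gives an early, objective warning that the current rate of progress is not enough.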

It was clear that something fundamental was wrong, but not what. To provide support I flew out to Germany so that I could review the Development team's internal processes and offer guidance.

Prior to my arrival in Germany the team had been briefed on my role while I was there, as it was vital that I had their full support. On meeting the team I set out to understand their current processes and procedures in the following key areas:

- Lines of communication

- Defect resolution

- Code Deployment

For background, the Program Test Manager and SIT Test Manager also worked for Planit, which enabled very open discussions about the current status.

On arrival at the development office it seemed strange to see a traditional office layout, with the team separated into several rooms, as all the IT consultancies I have worked for have adopted the open plan approach. As I had only been assigned there for two weeks, I met with the Dev team first thing in the morning in the main boardroom so that I could introduce myself and get a feel for the current situation.

All the Dev team's contact information had been provided before I arrived in Germany, so now it was just a matter of putting a face to each of the eight names. The team seemed very approachable at our first meeting, and I made it clear that I was there to provide support and that they should let me know as soon as possible if they had any queries.

The team in Sydney had already set up a daily defect call with the German development team. Given the time difference, this was at 7:30am German time, 3:30pm Sydney time. The Dev Lead and I attended this call to discuss the top ten defects that the test team needed resolving. The current process was for the Dev Lead to assign the discussed defects to the developers, not in a joint meeting but by walking into each of the rooms and discussing them with the developers one by one.

During further discussions it became clear that the team didn't currently have any daily meetings, so my first action was to set up two: a 9am meeting to provide feedback from the 7:30am conference call with Sydney, and a 4pm meeting where the Dev team could provide an update on the day's progress.

To support testing progress, the Test and Development teams were using JIRA to store test scripts, track execution, manage defects and generate reports. To provide an overview of current testing progress, a dashboard had been created in JIRA giving a view of SIT test execution. This was the logical starting point for my first 4pm meeting, as all the Dev team had access to JIRA and any defects assigned to them could be viewed in their to-do list. But once I started working through the dashboard I found a problem: while it did an excellent job of showing test progress and breaking down all defects by status, it didn't show where the defects were sitting.

Fortunately the Test Manager provided a defect report at the end of each day, which identified each of the defects assigned to the German Development team. The report also split the defects into new, retested but failed, retested and passed, and outstanding.

To be able to drive the 4pm meeting I extracted the defects from JIRA into Excel, then filtered out all the defects that were rejected or closed and any that were not assigned to a member of the Development team. As part of the extraction, JIRA includes a hyperlink for each defect so that with one click JIRA can be launched to show the defect details. I inserted a column for notes and then held the 4pm meeting with the team, updating the new column with any comments, which I then used to create the daily report. The test team had not previously received a report like this, so it quickly provided visibility of Dev progress: I identified not only which defects had been fixed but also, for outstanding defects, when we were aiming to deliver them.
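The same filtering could equally be scripted instead of done by hand in Excel. The sketch below is only an illustration under assumptions: the column names (Key, Status, Assignee), the status values and the sample rows are hypothetical and would need to match the columns of the actual export.

```python
import csv
import io

# Statuses to drop from the working list (assumed spellings).
EXCLUDED_STATUSES = {"rejected", "closed"}


def open_dev_defects(rows, dev_team):
    """Keep defects that are still open and assigned to a Dev team member."""
    team = {name.lower() for name in dev_team}
    kept = []
    for row in rows:
        if row["Status"].strip().lower() in EXCLUDED_STATUSES:
            continue
        if row["Assignee"].strip().lower() not in team:
            continue
        # Add a blank notes column to be filled in during the 4pm meeting.
        kept.append({**row, "Notes": ""})
    return kept


# Hypothetical export content for illustration only.
sample_export = """Key,Status,Assignee
DEF-1,Open,anna
DEF-2,Closed,anna
DEF-3,In Progress,ben
DEF-4,Open,tester1
DEF-5,Rejected,ben
"""

rows = list(csv.DictReader(io.StringIO(sample_export)))
for defect in open_dev_defects(rows, dev_team=["anna", "ben"]):
    print(defect["Key"], defect["Status"], defect["Assignee"])
```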

To ease this process going forward I asked the JIRA admin team to create a defect filter showing defects by status and by assignee. This enabled the Dev team to see clearly not only how many defects were assigned to them but also how many were assigned to the other members of the team. With this view the team could self-manage the defect volume assigned to each individual. It also helped from a management perspective and, where individuals were on sick leave, enabled the team to reassign defects easily.
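The view that such a filter provides amounts to a count of defects per assignee, broken down by status. The sketch below shows that summary in plain Python; it is not a JIRA query or the filter the admin team built, and the field names and sample data are hypothetical.

```python
from collections import Counter, defaultdict


def defects_by_assignee_and_status(defects):
    """Count defects per assignee, broken down by status."""
    summary = defaultdict(Counter)
    for defect in defects:
        summary[defect["assignee"]][defect["status"]] += 1
    return summary


# Hypothetical defects for illustration only.
defects = [
    {"key": "DEF-1", "assignee": "anna", "status": "Open"},
    {"key": "DEF-3", "assignee": "ben", "status": "In Progress"},
    {"key": "DEF-6", "assignee": "anna", "status": "Open"},
    {"key": "DEF-7", "assignee": "anna", "status": "Fixed"},
]

for assignee, counts in sorted(defects_by_assignee_and_status(defects).items()):
    total = sum(counts.values())
    breakdown = ", ".join(f"{status}: {n}" for status, n in sorted(counts.items()))
    print(f"{assignee}: {total} assigned ({breakdown})")
```

Seeing the totals side by side is what makes an uneven workload obvious and lets the team rebalance it without waiting for the Dev Lead.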

Lines of Communication

The next area to look at was lines of communication; in my experience, a lot of time can be lost if these are not set up clearly. With the test team working remotely from Development, I investigated how Development clarified the defects raised, as a defect can easily be open to interpretation depending on its level of detail. At this point it became clear that no direct communication had ever happened between the teams, with all communication being via either JIRA or email.

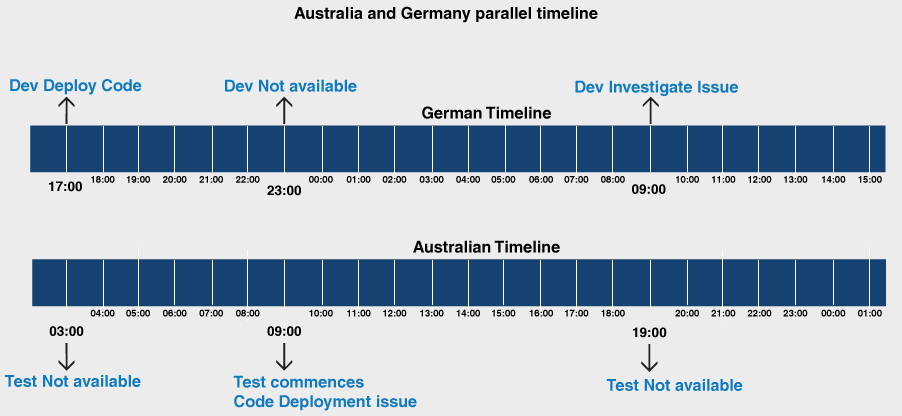

This turned out to be due to a misalignment of working hours, as the German team and the Sydney team were both working 9 to 5 local time. My assumption had been that the Development team were working shifts to provide support for testing, but this was not the case. I drew up a timeline on a whiteboard to show the alignment between Sydney and Germany: the teams were 100% out of phase, with a 7hr gap between Dev finishing and Test starting. Taking the scenario where Dev deploys the latest build and there is an issue preventing testing from progressing, the timeline would be as follows:

This meant that for a deployment issue that might take 30 minutes to resolve, Test would lose a full day of testing, and the elapsed time before the issue could be investigated was 15hrs from the original deployment.

To resolve this issue I drew up a timeline across both countries to identify the best fit. The time slot selected was for the German team to start at 5am (3pm Sydney time) and the Sydney team to start at 3pm, giving us 8hrs of overlap each day. For the scenario mentioned above, the Dev team would prepare the day before and then roll out the code at 5am; Test would validate with smoke tests and any changes would be applied immediately.
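The whiteboard exercise can be reproduced with a few lines of code. This is a minimal sketch under stated assumptions: the function is hypothetical, shifts are taken to be 8 hours long, and the 10-hour offset is inferred from "5am (3pm Syd)" above. The real offset between Germany and Sydney changes with daylight saving, so it should be checked for the dates in question.

```python
def overlap_hours(shift_a, shift_b, b_ahead_by):
    """Return the daily hours of overlap between two shifts in different time zones.

    shift_a and shift_b are (start, end) tuples in each team's own local
    24-hour clock; b_ahead_by is how many hours team B's clock is ahead of A's.
    """
    # Express team A's shift on team B's clock (values may run past 24).
    a_start = shift_a[0] + b_ahead_by
    a_end = shift_a[1] + b_ahead_by
    total = 0
    # Compare against B's shift on the previous, same and next day to
    # catch overlaps that cross midnight.
    for day_shift in (-24, 0, 24):
        b_start = shift_b[0] + day_shift
        b_end = shift_b[1] + day_shift
        total += max(0, min(a_end, b_end) - max(a_start, b_start))
    return total


OFFSET = 10  # assumed hours Sydney is ahead of Germany

# Both teams working 9 to 5 local time.
print(overlap_hours((9, 17), (9, 17), OFFSET))
# Germany starting at 5am, Sydney starting at 3pm.
print(overlap_hours((5, 13), (15, 23), OFFSET))
```

Trying a few candidate start times for each team through a function like this is a quick way to find the best fit before presenting options to the teams.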

I presented this option initially to the key Dev management, then to the Dev team, talking them through the scenario. The benefits were so obvious that everyone was behind the change, and we created a Dev team roster so that not all the team had to come in at 5am. I then presented to the Sydney team, where it was again accepted, and the test team adjusted their start time in line with the Dev team.

Defect Resolution

The next step to further support this change was to set up direct lines of communication for defect resolution, now that the teams' working hours were aligned. I requested contact numbers for all the test team and set up the following process:

- Test would assign a new defect to a nominated individual within the Dev team

- Once a defect was assigned to that individual, it was ready to be triaged by the whole team so that it could be assigned to the correct Dev team member

- Once the Dev team member had been identified, they would call the tester to discuss the issue further and validate the steps taken

Both the Dev and Test teams were taken through the process, which worked exceptionally well. As we were delivering a new system, we found that quite a few issues turned out to be related to the testers' understanding of the system, and direct communication enabled efficient knowledge transfer between the teams.

Code Deployment

Although we now had good lines of communication, we still faced the issue of building confidence between the teams. The only way this could be achieved was by improving the quality of the code deliveries, as previously only 10 to 15% of defect fixes passed retest.

To address this I set up an internal QA step: any defect fixed by the development team was assigned to me in JIRA, which meant that it was ready for a walkthrough. I would then sit with the developer so that they could walk me through the test. Only when this had been completed was the defect assigned back to the Test team for retest. Within several days of starting this process we moved the retest pass rate from 10 to 15% up to 100%, and so started to gain the confidence of the test team and senior management.

The final piece in the puzzle was code deployment. Previously no visibility had been provided of when new code was available, and after further investigation I found that the Dev team were making continuous drops throughout the day as each defect was fixed.

During our daily meetings we addressed this and agreed that there would initially be only one build, at the end of the day. To accompany the build, the Dev team would also send an email to their colleagues who had flown out to Sydney, as some on-site configuration was required to support the build. We now had a repeatable process by which all parties were fully informed of the current status. I also followed this up with a call to the Test Manager in Sydney just after deployment to make sure that there weren't any issues.

Once the single drop per day became stable, we moved into a position where we could deliver a second drop. The first drop was delivered at 5am; then at 9am I would meet with the Dev team to understand which defects had been fixed, and then I would call the Test Manager. It was his decision whether he wanted the second drop, based on Test progress and the number of Test Cases the additional defect fixes would unblock.

Conclusion

The processes identified above were applied within the first week of my being on site in Germany. The team (Test and Dev) made fantastic progress, and we managed to complete testing 1 week ahead of the projected schedule, which was an immense achievement.

When working with a distributed team, collaboration is the key: if the process isn't working, then all sides need to be investigated to understand why. Although the changes mentioned were mainly from the perspective of Development, it was only by implementing them across both Dev and Test that the real benefits were felt.

So to answer the question “Can a distributed team work as efficiently as a team based in a central location?” The answer is yes but it relies heavily on the implemented procedures and direct lines of communication between the teams.
