The answer to this question isn’t performance testing, DevOps or Agile. The answer is that there is no odd one out, because all three should work together.

The Problem

Performance testing has traditionally been shunted to the end of the project lifecycle, where it has been seen as a must-have ‘tick in the box’ rather than an essential type of testing critical to the final decision on whether the project should go live. It is almost always squeezed into a shorter timeframe than originally planned, because development and functional testing overrun while the go-live date stays fixed. With this approach, problems found so close to go-live can be very expensive to fix.

The Main Issues:

- Performance is not really considered by the project until the performance tests are run

- Performance is not always considered by functional testers

- Performance issues are not found until the end

- No time to address performance issues properly

- Performance issues are expensive to fix at the end of the development process

Performance testers have been campaigning for a long time to have performance testing considered throughout the development lifecycle. The problem is not just one of timing; projects do not see the benefit of considering performance testing as part of the whole development lifecycle, and so they miss out on the tangible benefits it can bring. Too often projects push performance testing to the last minute, where they pay for the tests but have little or no time to act on the problems raised. All the pain and none of the gain.

How the performance tester feels:

Figure 1: How the performance tester feels between Development & Test and Go Live

When Agile and DevOps arrived, it seemed like the perfect opportunity to resolve this problem once and for all. The foundation of Agile combined with DevOps is that every discipline is involved throughout the project to produce a better product.

So I asked, “Where does performance testing fit in Agile?”

The response I got back was: “In a performance hardening iteration at the end”. This sounded depressingly familiar.

The Thinking

One of our customers was keen to work with us and do things better. Together, we looked for a practical strategy to build a performance testing element into the whole System Development Life Cycle (SDLC). The customer’s preference for the DevOps shift-left model helped to some extent. The team had already created an automated functional smoke test, followed by an automated functional regression test, as part of the integrated release process, so adding a performance testing element seemed a natural progression.

The belief was that you needed to performance test with a production-like load on a production-like environment for the results to have any meaningful value. Anything less could be considered a waste of time, because the complexity of the system and the number of variables make it practically impossible to relate the results to what you would see in a production-like environment. The thinking was that testing earlier in the SDLC doesn’t work for a number of reasons:

- You don’t have the code that is actually going into production, as it will continue to change

- You don’t have all the functionality to put a live-like load on the system, as it hasn’t all been developed

- You generally don’t have a live-like environment in the early stages of a project

- You don’t always have the full testing analysis completed (all scenarios, user volumes, etc.)

The ideal of testing with a production-like load on a production-like environment doesn’t fit early in the Agile SDLC. So what could be done?

Benchmarking came up as an option. Benchmarking means running the same test before and after changes are made and comparing the results to identify any impact. However, a benchmark test does not have to use a production-like load. People tend to assume that a benchmark will tell them whether they can go live, but because almost no benchmarks are “production-like” in their transaction mix or volumes, the results will not be indicative of performance in production. This has not stopped some projects from running transaction-specific benchmarks as their only performance testing, only to discover at go-live that the system doesn’t cope with the combined load.
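The before-and-after comparison at the heart of benchmarking can be sketched in a few lines of Python. This is a minimal illustration rather than the tooling used on the project: it assumes each run produces response-time samples (in milliseconds) for each transaction, and the function names, the choice of the 90th percentile and the 10% tolerance are all illustrative assumptions.

```python
from statistics import quantiles


def p90(samples_ms):
    """90th percentile of response times in milliseconds (needs at least 2 samples)."""
    # quantiles(n=10) returns the 9 decile cut points; the last one is the p90.
    return quantiles(samples_ms, n=10)[-1]


def compare_benchmarks(before, after, tolerance=0.10):
    """Compare two runs of the same benchmark, transaction by transaction.

    `before` and `after` map a transaction name to its response-time samples.
    A transaction is flagged as regressed when its p90 grows by more than
    `tolerance` (a hypothetical 10% threshold by default).
    """
    report = {}
    for name, old_samples in before.items():
        if name not in after:
            continue  # transaction not exercised in the new run
        old, new = p90(old_samples), p90(after[name])
        change = (new - old) / old
        report[name] = {
            "before_p90": old,
            "after_p90": new,
            "change": change,
            "regressed": change > tolerance,
        }
    return report
```

Because the same test is run each time, a flagged transaction points at whatever changed between the two runs; the absolute numbers say little about production on their own.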

The solution was to use simple benchmarking tests as part of the answer, but they were not the complete solution.

The Solution

Part A of the Solution:

The project, although not pure Agile, was broken down into several phases, each made up of a number of three-week sprints. At the beginning of each phase there was a short window to plan and organise it. Each phase targeted part of the overall functionality, and the product would not be ready for release into production until all phases were complete. The initial phases focused on developing web services; later phases moved on to developing the GUIs that would consume them.

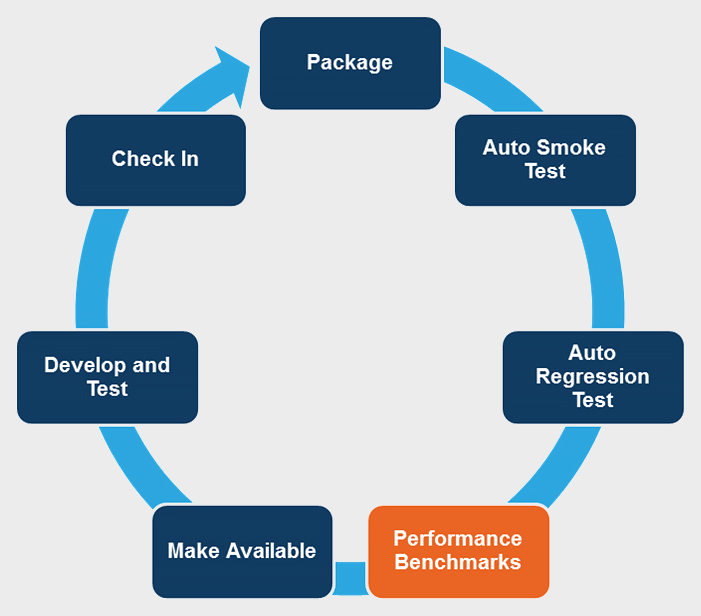

The project started by building a number of web services. We had already put together an automated functional smoke test, followed by an automated functional regression test, as part of the continuous integration cycle. Taking that one step further, we introduced simple performance benchmarking of the web services.

Figure 2: Continuous Integration Cycle

These benchmarks were not targeted at production-like volumes; instead they were scaled to give a reasonable load for the available infrastructure, and only basic response time information was collected. The aim was to get an indication of performance, but it was important that the tests did not take long to set up and run, so that they did not unduly slow down the whole continuous integration process.
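A benchmark of this kind can stay deliberately small. The sketch below assumes a zero-argument `call` function that performs one request against the service under test (a hypothetical wrapper you would write around your own HTTP client); it fires a fixed number of requests at modest concurrency and reports only basic response-time figures, so it should run quickly enough to sit inside a CI pipeline. The request count, concurrency and percentile choice are illustrative assumptions.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from statistics import mean


def timed_call(call):
    """Run one request and return its elapsed time in milliseconds."""
    start = time.perf_counter()
    call()
    return (time.perf_counter() - start) * 1000


def run_benchmark(call, total_requests=50, concurrency=5):
    """Send `total_requests` requests using `concurrency` worker threads.

    `call` is a hypothetical zero-argument function that performs one request.
    Returns a small summary of response times in milliseconds.
    """
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        samples = sorted(pool.map(lambda _: timed_call(call), range(total_requests)))
    # Nearest-rank 90th percentile: rank = ceil(0.9 * n), computed with integers.
    p90_rank = (9 * len(samples) + 9) // 10
    return {
        "requests": len(samples),
        "min_ms": samples[0],
        "mean_ms": mean(samples),
        "p90_ms": samples[p90_rank - 1],
        "max_ms": samples[-1],
    }
```

In a real pipeline `call` might wrap a request to one web service endpoint; a stub such as `lambda: time.sleep(0.01)` is enough to try the sketch out.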

These initial tests proved valuable from the start: the results showed that the web services were not responding fast enough to meet the requirements, even under low load. This enabled the developers to revise the underlying design very early in the process, after which response times dropped well below the stated requirements.

In the same way that the automated smoke and regression tests were extended and maintained, the performance benchmarks were kept current throughout the SDLC.

This process worked well and highlighted numerous occasions where the code wasn’t performant or a change had adversely affected performance. It proved very efficient, because any degradation in performance could usually be attributed to the most recent set of changes, which made identifying the root cause and fixing it generally straightforward.

The benchmarks also highlighted a couple of instances where previously identified and resolved performance issues were reintroduced because old code had been picked up and packaged into a release. This was not strictly a performance issue but one of version control and code management; because the benchmarks caught it early, a large amount of rework was saved. So not only did the tests help improve the code, they also identified issues with the release process.

As the GUIs were released, we developed benchmarks based around the key business transactions and added them to the performance benchmarking suite.

The benchmarks added real value but they didn’t give us the whole story.

Part B of the Solution:

Even with the benchmarks, we still did not know how the system would perform under production-like conditions. So the second part of the solution was to run performance hardening iterations: windows in which production-like loads are placed on the system.

Figure 3: Project Build Structure

We ran one performance hardening iteration at the end of the second-to-last phase and another at the very end of the last phase. The end of the second-to-last phase was identified as the earliest point at which a production-like environment would be available for testing, and by then we felt that enough of the application had been developed to give the test results real value.

These production-like performance tests were set up very quickly and the execution window was short for the following reasons:

- We had a full understanding of the business transactions and their test data needs gained while developing the benchmarking

- We had reusable test assets from the benchmarks

- We had already established relationships with the support teams and had a proven, effective process for addressing identified issues, developed and refined during the benchmarking

- A number of performance issues had already been identified and resolved during the benchmarking, before the performance hardening iterations
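One way to make benchmark assets reusable is to describe the business transactions and their mix once, as data, and derive each test’s volumes from a load profile. The sketch below is hypothetical: the `Transaction` and `LoadProfile` types, the transaction names, their shares and the hourly volumes are placeholders, not figures from this project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Transaction:
    name: str
    share: float  # fraction of the overall business transaction mix


@dataclass(frozen=True)
class LoadProfile:
    name: str
    transactions_per_hour: int  # total target throughput for the test


def plan_load(transactions, profile):
    """Split a profile's hourly volume across transactions according to the mix."""
    total_share = sum(t.share for t in transactions)
    return {
        t.name: round(profile.transactions_per_hour * t.share / total_share)
        for t in transactions
    }


# Hypothetical mix and profiles: one scaled for CI, one production-like.
MIX = [Transaction("search", 0.6), Transaction("login", 0.3), Transaction("checkout", 0.1)]
BENCHMARK = LoadProfile("ci-benchmark", 1_000)
HARDENING = LoadProfile("production-like", 36_000)
```

In this sketch both profiles share the same transaction definitions, so moving from a CI benchmark to a production-like hardening run changes only the volumes, not the test assets.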

No major performance issues were found during the hardening iterations, in contrast to the significant number found during benchmarking.

Costs

This is a difficult area to quantify. Performance testing skills were required at various points throughout the SDLC rather than just at the end. However, the windows required at the end were considerably shorter than in a more traditional approach, because of the assets and IP developed during the early engagement and the reduced number of performance issues. Performance issues were discovered much earlier in the project lifecycle, when it was much easier to find their root cause and resolve them, resulting in cost savings and better value for the client.

Quality

Quality improved throughout the project because performance issues were identified early enough for designs to change and for issues to be addressed properly. Performance was an early design consideration and, as a result, was designed into the solution. In contrast, if significant performance issues are found at the end of a project, there is not always the time or resources to resolve them properly. In some cases the problems simply have to be lived with, or they are masked by scaling up the system. Either way, the problem is still there, and that quality debt will follow the system throughout its life until it is resolved or the system is decommissioned.

Conclusion

The approach we adopted delivered the application faster and with better quality. Early performance benchmarks highlighted performance issues when they were cheaper to fix, and had the added advantage of focusing the whole team on performance. As a result, the time required to set up and run the production-like performance tests was reduced.

One size never fits all, and if this solution doesn’t work for your project, don’t give up and accept a window at the end. Work with the project to come up with a solution that does the job. Agile and DevOps are about engaging the right people earlier, and that includes performance testers.